Are Knowledge Graphs useful for the future of AI?

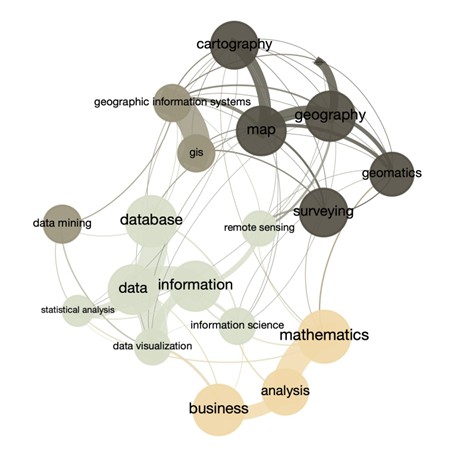

Figure 1: A graph of a subsurface data management process, illustrating the relations between geoscience activities.

Introduction

Large Language Models (LLMs) are now used across nearly every domain and deliver value to billions of people. Every day, they impress us with their remarkable capabilities and leave us in awe of the creativity of the teams who shaped them.

Yet, when we try to build AI agents powered by LLMs, we quickly encounter their limitations. It seems that there is a clear gap between having an extraordinary algorithm that predicts the next word and having a system capable of predicting the next action to take autonomously.

This limitation is not surprising. As humans, our ability to act in the real world depends on the internal representation we build of that world. This representation comes from a combination of personal experience and structured learning from parents, teachers, books, television, and now the internet.

LLMs, however, learn mostly from text, much of it drawn from the internet. The world model they derive from that text is excellent for generating a LinkedIn post, but far less useful for guiding an autonomous agent that must decide what to do in the real world.

To address this gap, many researchers have returned to an older idea from the early days of NLP: Knowledge Graphs. At first glance, this may seem surprising. Knowledge graphs are not new, and despite decades of work by linguists and domain experts, they never delivered breakthroughs in translation, content generation, or any of the tasks that modern LLMs now perform with ease. They were also expensive to build and maintain. So how could a concept from 20th‑century linguistics help us today?

At AgileDD, we believe the answer may lie in the architecture of future AI systems. The next generation of AI could be hybrid, combining LLMs, structured knowledge, and symbolic reasoning, with Knowledge Graphs playing a central role and the Human-In-The-Loop approach remaining at the core of trustworthy models.

Could the future of AI be LLM-only AGI?

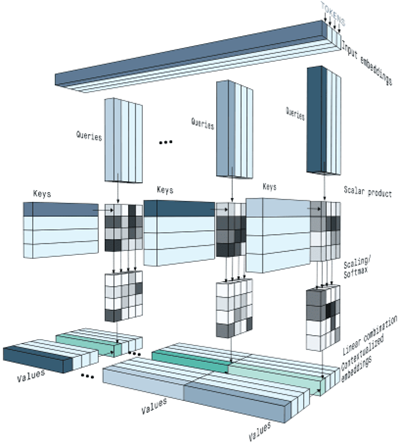

Figure 2: An illustration of the attention mechanism that makes LLMs so powerful.

The question of whether future AI systems can rely solely on LLMs—or whether they must incorporate structured knowledge—has become one of the most active debates among data scientists. To begin, it is important to acknowledge the strongest arguments from those who believe that Knowledge Graphs are unnecessary, and that relying on them could even lead AI development down an unproductive path.

First, LLMs already encode a vast amount of implicit knowledge. Training on a large share of the public internet, major digital libraries, and countless other sources is far from trivial. With billions of parameters, LLMs internalize rich relational structures that function like latent knowledge graphs. From this perspective, adding explicit Knowledge Graphs (KGs) may appear redundant.

Second, over the past 18 months, LLMs have demonstrated emergent reasoning abilities—chain‑of‑thought, tool use, planning behaviors—that arose without any symbolic scaffolding. This is appealing because Knowledge Graphs require ontologies and schemas that must be curated manually. Such curation is costly, difficult to scale, and often struggles with ambiguity, context, and exceptions—areas where LLMs excel.

For proponents of the LLM‑only approach, AGI will emerge through scaling, reinforcement learning, and architectural improvements. In their view, Knowledge Graphs are unnecessary and may even slow progress. They often point out that the human brain does not store knowledge as explicit graphs, but rather as distributed representations—a structure that resembles the embedding spaces used by LLMs.

Why Knowledge Graphs are increasingly likely to improve AI

A growing number of leading researchers—including Yann LeCun—have taken a clear and uncompromising position: LLM‑only systems cannot reach AGI. LeCun argues that current LLMs represent a technical dead end for achieving human‑level intelligence, and that fundamentally new architectures will be required.

Supporters of this view highlight several structural limitations of LLM‑only systems:

- LLMs cannot build or maintain long‑term context.

- LLMs cannot learn continuously from interaction.

- LLMs cannot reason, plan, or explain using causal structures in a human‑like way.

It would be inaccurate to claim that everyone skeptical of LLM‑only AI is advocating for hybrid LLM + KG architectures. However, the direction of the major AI laboratories is unmistakable: they are all moving toward hybrid systems, even if they do not always use the term “knowledge graph.”

Examples include:

- DeepMind’s AlphaCode 2 → retrieval + structured reasoning

- OpenAI’s o1 models → external memory + tool use

- Microsoft’s GraphRAG → explicit integration of LLMs with graph‑structured knowledge

- Meta’s LLaMA agents → planning modules + memory stores

In this shift toward hybrid architectures, Knowledge Graphs offer several critical advantages. They align perfectly with the AgileDD mission: Automation for data, information and knowledge capture from unstructured documents with a Human-In-The-Loop approach. They can provide ground truth, validated by humans, to reduce hallucinations. They can compensate for the limited context windows and fragile long‑term memory of LLMs. Specifically, KGs can deliver:

- Persistent memory

- Identity staking — the ability to anchor entities to stable, explicit, persistent identities, something LLMs cannot do reliably

- Stable world models — explicit relationships between entities, enabling the encoding of physical laws, business rules, and domain constraints essential for multi‑step reasoning

- Causal relationships — a foundation for explainability, one of the major weaknesses of LLM‑only systems
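To make these properties concrete, here is a minimal sketch of a knowledge graph held in memory. It is an illustration only, not the AgileDD implementation: the `KnowledgeGraph` class, the `facts_as_context` helper, and the well and event names are all hypothetical. It shows stable entity identities (several spellings resolve to one ID), explicit relationships stored as triples, and a causal chain that can be read back as an explanation.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class KnowledgeGraph:
    """Toy graph: stable entity IDs plus (subject, predicate, object) triples."""
    aliases: dict = field(default_factory=dict)  # lower-cased mention -> entity ID
    triples: set = field(default_factory=set)    # {(subject_id, predicate, object_id)}

    def add_entity(self, entity_id, *names):
        """Register a stable ID and any surface forms that should map to it."""
        for name in (entity_id, *names):
            self.aliases[name.lower()] = entity_id

    def resolve(self, name):
        """Map a mention to its stable ID, or None if the entity is unknown."""
        return self.aliases.get(name.lower())

    def add_fact(self, subject, predicate, obj):
        """Store a fact between two known entities; unknown mentions are rejected."""
        s, o = self.resolve(subject), self.resolve(obj)
        if s is None or o is None:
            raise KeyError(f"unknown entity in ({subject!r}, {predicate!r}, {obj!r})")
        self.triples.add((s, predicate, o))

    def facts_about(self, name):
        """Return every stored triple that mentions the entity, in sorted order."""
        entity_id = self.resolve(name)
        return sorted(t for t in self.triples if entity_id in (t[0], t[2]))

    def explain(self, effect):
        """Follow 'causes' edges backwards and return the upstream causal triples."""
        start = self.resolve(effect)
        seen, queue, chain = {start}, deque([start]), []
        while queue:
            node = queue.popleft()
            for s, p, o in sorted(self.triples):
                if p == "causes" and o == node:
                    chain.append((s, p, o))
                    if s not in seen:
                        seen.add(s)
                        queue.append(s)
        return chain


def facts_as_context(kg, name):
    """Render stored facts as plain lines that could be prepended to an LLM prompt."""
    return "\n".join(f"{s} {p} {o}" for s, p, o in kg.facts_about(name))


# Hypothetical example data.
kg = KnowledgeGraph()
kg.add_entity("well:W-12", "Well 12", "W12")
kg.add_entity("event:pump_failure", "pump failure")
kg.add_entity("event:pressure_drop", "pressure drop")
kg.add_fact("pump failure", "occurred_at", "Well 12")
kg.add_fact("pump failure", "causes", "pressure drop")

print(kg.resolve("w12"))             # same ID whatever the spelling
print(kg.explain("pressure drop"))   # upstream causes of the pressure drop
print(facts_as_context(kg, "W12"))   # facts an LLM prompt could be grounded on
```

Persistence is left out of the sketch; in practice the triples would live in a graph database or a file so that they outlast any single conversation.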

A common objection is the perceived cost of Knowledge Graphs. Historically, KGs required long and expensive sessions with linguists and domain experts to manually design both the schema graph and the data graph. This is no longer the case. Today, AI can automate large portions of KG construction and maintenance using a combination of LLMs, extraction pipelines, and ontology‑learning techniques. Crucially, humans remain in control, able to validate and refine the output, which makes the process human‑centric rather than labor‑intensive.
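The human-centric workflow described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not a description of any particular product: `extract_candidates` is a hypothetical stand-in that returns fixed data where a real system would call an LLM or an extraction pipeline, and the `auto_accept` threshold of 0.9 is an arbitrary value chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A proposed fact plus the extractor's own confidence score in [0, 1]."""
    subject: str
    predicate: str
    obj: str
    confidence: float


def extract_candidates(text):
    """Hypothetical stand-in for an LLM or extraction pipeline; returns fixed data."""
    return [
        Candidate("Well 12", "located_in", "Block A", 0.97),
        Candidate("Well 12", "operated_by", "Acme Energy", 0.58),
    ]


def triage(candidates, auto_accept=0.9):
    """Split candidates into auto-accepted facts and a queue for human review."""
    accepted, review_queue = [], []
    for c in candidates:
        (accepted if c.confidence >= auto_accept else review_queue).append(c)
    return accepted, review_queue


def apply_review(review_queue, decisions):
    """Keep only the queued candidates that a reviewer explicitly approved."""
    return [c for c in review_queue if decisions.get(c, False)]


candidates = extract_candidates("document text goes here")
accepted, queue = triage(candidates)

# A reviewer approves or rejects each queued candidate; one approval is simulated here.
decisions = {queue[0]: True}
graph_facts = accepted + apply_review(queue, decisions)

for fact in graph_facts:
    print(fact.subject, fact.predicate, fact.obj)
```

Setting `auto_accept` above 1.0 sends every candidate to the reviewer, which trades throughput for a graph in which each fact has been checked by a human.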

By contrast, scaling LLMs toward human‑level intelligence is expected to require vastly more compute, energy, and infrastructure, raising significant economic and environmental challenges.

Figure 3: A graph generated by ChatGPT 4.2 (Microsoft GraphRAG project).

The debate is not only technical

The current discussion about the architecture of future AI is not purely technical; it is also deeply philosophical. To make informed choices, we must confront several fundamental questions:

- What is intelligence? If intelligence is defined as the ability to make generalized predictions, then an LLM‑only AGI might be sufficient. But if intelligence requires the ability to reason, to plan, and to make predictions grounded in long‑term memory, then Knowledge Graphs will play a critical role.

- What is knowledge? If knowledge can remain implicitly encoded in model weights, then larger LLMs will simply contain more of it. But if knowledge must be structured, explicit, and auditable—for regulatory, operational, or safety reasons—then Knowledge Graphs become indispensable.

- What is the role of humans in digital transformation? Knowledge Graphs also offer a way for humans to keep a hand on the wheel and continue steering the digitalization process. In this approach, advanced AI agents will be seen as powerful assistants, not as replacements for humans.

Conclusion

It is increasingly clear that Knowledge Graphs have a meaningful role to play in the pursuit of AGI. They offer grounding, reasoning, memory, reliability, and explainability—capabilities that LLMs alone cannot fully provide. Whether they will become a universal component of future AI systems is not yet certain, but the limitations of the LLM‑only approach are becoming more apparent.

What does seem likely is that the next generation of AI will rely on a hybrid architecture: a deliberate combination of LLMs, structured memory, and symbolic reasoning. In this emerging landscape, Knowledge Graphs stand out as the strongest candidate for the structured memory layer—the part of the system that preserves identity, context, and world understanding over time.

They may not be the entire solution, but they are poised to become an essential part of it.

This is precisely the future AgileDD is building.

Figure 4: AI-generated graphs not only improve the chat experience but also enable data art!